To ensure autonomous vehicles’ safety, countries still need to develop adequate infrastructure, pass laws, and establish new standards to regulate the industry.

But the greatest responsibility for the safe and secure operation of self-driving vehicles rests with car manufacturers, OEMs, and suppliers. These companies drive automotive innovation and use machine learning (ML) to develop next-gen autonomous vehicle systems.

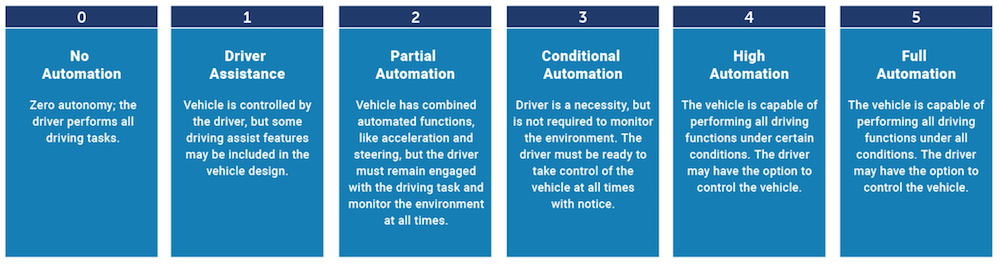

This article will explore different use cases to better understand how machine learning (ML) and deep learning (DL) technologies are used to build advanced systems for different levels of vehicle automation.

Autonomous vehicles (AVs) enable people with no driving experience or people with disabilities to travel with luxury and flexibility, allowing them to read, relax, or even work during the trip. Radar, sonar, LiDAR, odometry, GPS, and inertial measurement units are some of the sensors used by AVs to assess the current environment. Advanced control systems interpret this sensory data to identify obstacles and relevant signage and to determine an appropriate path.

The COVID-19 pandemic had a profound impact on the AV industry, as it did on other industries. In China, predicted to be the world’s largest AV market, connected car sales declined 71% at the start of the pandemic in February 2020. The situation was similar in other large markets: the drop was 80% in Europe and 47% in the United States. However, production has since recovered to previous levels, sometimes even exceeding the levels seen before the first quarter of 2020.

Most AVs currently available on the market have Level 2 and Level 3 automation, which means these vehicles have partial (lane departure warnings, collision detection, cruise control, ADAS) or conditional driving automation (environmental detection). Tesla Autopilot and General Motors Super Cruise systems both qualify as Level 2 automation, while the 2019 Audi A8L qualifies as Level 3 automation.

However, based on different AV market growth estimations, it won’t take long for Level 4 (high driving automation) and Level 5 (full driving automation) AVs to hit the market.

Determining how to deliver safe, economical, and practical driverless cars is one of our era’s most immense technical challenges. Machine learning helps automotive companies meet this challenge. How? Let’s explore it in this article.

While most Level 4 and 5 AVs are still in the prototyping and testing phases, ML is already being applied to several aspects of driverless technology. It also looks set to play an essential role in future developments.

Now let’s review some use cases for ML in AVs.

Neural networks can recognize patterns, which self-driving cars can use to monitor the driver and their behavior. For example, facial recognition can be used to identify a driver and check whether they have the right to use the car, which can help prevent unauthorized use and theft.

The autonomous car system can also use occupancy detection algorithms to help optimize the experience for others in the vehicle. This could mean the automatic adjustment of the air conditioner according to the number and location of passengers.

Deep learning frameworks like Caffe and Google TensorFlow use algorithms to train and enable neural networks. They can be used with image processing to learn about objects and classify them so that a vehicle can easily respond to its environment. Such deep learning algorithms are used for lane detection, where AI determines the turning angles required to avoid objects or stay in the lane and, therefore, accurately predicts the path ahead.

Neural networks can also be used to classify objects. Using machine learning, they can be trained to recognize a particular shape of various things. For example, they can distinguish between cars, pedestrians, cyclists, lamp posts, and animals.

Computer vision is also used to assess the proximity of an object and its speed and direction of movement. For example, an autonomous vehicle can use ML to calculate the clearance around it before changing lanes, which improves maneuvering.
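
The clearance check described above can be sketched in a few lines. This is a minimal illustration, not a production planner: it assumes a perception stack has already estimated, for each object in the target lane, its distance and closing speed. The names `TrackedObject`, `time_to_collision`, and `lane_change_is_clear`, and the threshold values, are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TrackedObject:
    # Hypothetical output of the perception stack for one object in the target lane.
    gap_m: float              # distance between our vehicle and the object, in metres
    closing_speed_mps: float  # positive when the gap is shrinking, in metres per second


def time_to_collision(obj: TrackedObject) -> float:
    """Seconds until the gap closes at the current closing speed."""
    if obj.closing_speed_mps <= 0:
        return float("inf")  # the object is holding distance or pulling away
    return obj.gap_m / obj.closing_speed_mps


def lane_change_is_clear(objects, min_gap_m=10.0, min_ttc_s=4.0) -> bool:
    """True when every object leaves enough distance and enough time (assumed thresholds)."""
    return all(
        o.gap_m >= min_gap_m and time_to_collision(o) >= min_ttc_s
        for o in objects
    )
```

In a real vehicle, the distance and speed estimates would come from trained models and sensor fusion; the sketch only shows how such estimates could feed a lane-change decision.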

Machine learning is also implemented for higher levels of driver assistance, such as perceiving and understanding the world around a car. This mainly involves using camera-based systems for object detection and classification, but there are also developments in LiDAR and radar technologies that will be described later in the article.

One of the biggest problems for autonomous driving is the misclassification of objects. The vehicle’s system typically interprets the data collected by various in-car sensors. However, with a difference of just a few pixels in the image produced by the camera, the system may mistake a stop sign for a speed limit sign.

AV systems can improve perception and identify objects more accurately when their machine learning models are trained on broader, more generalized data. Training an ML system on more varied inputs for the critical parameters on which it makes decisions helps validate the data and ensure that what the model learns matches reality. As a result, the system does not depend heavily on a single parameter or a small set of details that could otherwise push it toward a particular conclusion.

If the system is provided with data that is 90% about a blue car, then there is a risk that it will identify all blue objects as blue cars. This “overfitting” in one area can skew the data and therefore skew the output; thus, varied training is vital.
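
One simple way to catch this kind of skew before training is to look at how the labels are distributed. The sketch below is only an illustration: the function names `class_shares` and `dominant_classes` and the 50% threshold are assumptions, and a real pipeline would also check variety in lighting, weather, viewpoint, and so on.

```python
from collections import Counter


def class_shares(labels):
    """Return the fraction of training samples that carry each label."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()}


def dominant_classes(labels, max_share=0.5):
    """List labels whose share of the dataset exceeds max_share (an assumed threshold)."""
    return sorted(
        label for label, share in class_shares(labels).items() if share > max_share
    )


# A dataset that is 90% blue cars, as in the example above.
labels = ["blue_car"] * 9 + ["red_car"]
print(dominant_classes(labels))  # ['blue_car']
```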

Each sensor modality has its strengths and weaknesses. For example, cameras provide high-quality texture and color recognition. But cameras are sensitive to conditions that can impair the field of view and visual acuity, just like the human eye. Thus, fog, rain, snow, and poor or changing lighting can impair perception and, hence, the system’s detection, segmentation, and prediction.

While cameras are passive, radar and LiDAR are active sensors and are more accurate than cameras when measuring distance.

Machine learning can be used individually on the output of each sensor modality to better classify objects, determine distance and movement, and predict the actions of other traffic participants.

With radar, ML can be applied to signals and point clouds to produce better clustering and more accurate 3D images of objects. Likewise, ML can be applied to high-resolution LiDAR data to classify objects.

Yet, combining sensor outputs is an even more reliable option. Camera, radar, and LiDAR can be combined to provide a 360-degree view of the vehicle. By combining all the outputs from different sensors, we get a complete picture of what is happening outside the car. And ML can be used here as an additional processing step on this combined output of all these sensors.

For example, an initial classification can be made using images from a camera. It can then be combined with the LiDAR output to determine the distance, magnify what the car sees, or check what the camera classifies. After combining these two outputs, various machine learning algorithms can be run on the combined data. Based on this, the system can draw additional conclusions that will help in detection, segmentation, tracking, and forecasting.
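
To make the idea concrete, here is a minimal sketch of combining a camera classification with a LiDAR distance. It assumes both sensors report the bearing of each object relative to the vehicle and matches them by nearest bearing. All class names, the `fuse` function, and the 2-degree tolerance are hypothetical; production systems rely on calibrated projections and tracking rather than a simple angle match.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CameraDetection:
    label: str          # class predicted from the camera image
    bearing_deg: float  # direction of the object relative to the vehicle heading


@dataclass
class LidarCluster:
    bearing_deg: float  # direction of the cluster centre
    range_m: float      # measured distance to the cluster


@dataclass
class FusedObject:
    label: str
    bearing_deg: float
    range_m: Optional[float]  # None when no LiDAR cluster confirms the detection


def fuse(camera, lidar, max_bearing_diff_deg=2.0):
    """Attach the range of the closest-bearing LiDAR cluster to each camera detection."""
    fused = []
    for det in camera:
        candidates = [
            c for c in lidar
            if abs(c.bearing_deg - det.bearing_deg) <= max_bearing_diff_deg
        ]
        nearest = min(
            candidates,
            key=lambda c: abs(c.bearing_deg - det.bearing_deg),
            default=None,
        )
        range_m = nearest.range_m if nearest is not None else None
        fused.append(FusedObject(det.label, det.bearing_deg, range_m))
    return fused
```

A detection that comes back with `range_m` set to `None` was seen by the camera only, which a downstream step could treat as lower confidence.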

One of the disadvantages of using machine learning to detect and classify objects is that massive datasets are required. As discussed earlier, autonomous car systems need to be trained on a wide range of scenarios to prevent distortion in the data. Skewed data will not give realistic results, so a car may react to a situation in a completely different, and possibly dangerous, way compared to how the human mind would interpret it.

It takes time and enormous computational resources to train a system thoroughly enough to avoid such bias in the data. It also takes time to validate and verify the training and make sure ADAS performs as expected in the various scenarios.

One aspect of driving that ML may not be able to handle is communication with other road users. Drivers are accustomed to making eye contact to find out whether a pedestrian will cross the road, or gesturing to another driver to move up. But it is unclear how an autonomous system can replicate this and how ML should be trained to do it. Until this puzzle is solved and systems come close to the human emotional intelligence and instantaneous predictions needed to deal with such situations, this kind of interaction will not be possible in autonomous vehicles.

Machine learning is also limited when it comes to training a system to respond with the intuition humans naturally have, for example, the “sixth sense” that a car might be about to pull in front of you or that a truck might suddenly hit the brakes.

Letting the machine make its own decisions is difficult for many people to accept. System determinism is something that many people, with the possible exception of computer scientists, find problematic. Some people suspect that “cars will take over,” and their trust in self-driving vehicles is low. Despite predictions of fewer accidents and deaths with autonomous vehicles, confidence has been further eroded by incidents during testing, such as an Uber self-driving car hitting a pedestrian in Arizona or a Tesla driver crashing while using Autopilot and playing a video game.

A fully autonomous system at Level 5 would require perfect functional safety, which is not easily guaranteed using machine learning alone. Due to the required amount and variety of training and the difficulty of replicating human intelligence, systems are not yet sufficiently capable of accurately detecting and classifying objects.

Vehicle powertrains typically generate a time series of data points. Machine learning can be applied to this data to improve motor and battery management.

With ML, the car is not limited by boundary conditions set at the factory. Instead, the system can adapt over time to the vehicle’s aging and respond to changes as they occur. ML allows boundary conditions to be adjusted as the vehicle system ages, the transmission changes, and the car gradually breaks in. Due to the flexibility of the boundary conditions, the vehicle can achieve more optimal performance.
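
As a rough illustration of a limit that adapts instead of staying fixed at its factory value, the sketch below tracks a slowly moving baseline with an exponential moving average. This is a simple statistical stand-in rather than a trained model, and the class name `AdaptiveLimit`, its parameters, and the example values are all assumptions.

```python
class AdaptiveLimit:
    """Alarm limit that follows a slowly drifting baseline (hypothetical helper)."""

    def __init__(self, initial_baseline, margin, alpha=0.1):
        self.baseline = initial_baseline  # factory-set starting point
        self.margin = margin              # allowed headroom above the baseline
        self.alpha = alpha                # how quickly the baseline follows new readings

    @property
    def limit(self):
        return self.baseline + self.margin

    def update(self, reading):
        """Return True if the reading exceeds the current limit; otherwise adapt."""
        exceeded = reading > self.limit
        if not exceeded:
            # Only normal readings move the baseline, so a fault cannot drag the limit up.
            self.baseline += self.alpha * (reading - self.baseline)
        return exceeded


# Example: a coolant temperature limit that starts at 90 + 15 degrees.
limit = AdaptiveLimit(initial_baseline=90.0, margin=15.0, alpha=0.5)
limit.update(94.0)   # normal reading, baseline drifts to 92.0
print(limit.limit)   # 107.0
```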

The system can be adjusted over time by changing its operating parameters. Or, if the system has sufficient processing power, it can adapt to a changing environment in real-time. The autonomous system can learn to detect anomalies and promptly notify maintenance or warn of imminent motor control failure.

ML can be applied to data acquired by onboard devices. Variables such as engine temperature, battery charge, oil pressure, and coolant level are fed into the system. They are analyzed to provide insight into engine performance and overall vehicle health. Signs of a potential malfunction can then alert the system and its owner that the vehicle should be repaired or that preventive maintenance is due.
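
A very small example of flagging unusual readings in such a time series is a rolling z-score: each new value is compared with the mean and spread of the readings just before it. This is a basic statistical baseline, not a learned model, and the function name `find_anomalies`, the window size, and the threshold are assumptions chosen for illustration.

```python
from collections import deque
from statistics import mean, pstdev


def find_anomalies(readings, window=5, threshold=3.0):
    """Return indices of readings that deviate strongly from the preceding window."""
    history = deque(maxlen=window)
    anomalies = []
    for i, value in enumerate(readings):
        if len(history) == window:
            mu = mean(history)
            sigma = pstdev(history)
            # Skip the check when the window is perfectly flat (sigma == 0).
            if sigma > 0 and abs(value - mu) / sigma > threshold:
                anomalies.append(i)
        history.append(value)
    return anomalies


# Engine temperature samples with one sudden spike.
temps = [90, 91, 89, 90, 91, 90, 120, 90]
print(find_anomalies(temps))  # [6]
```

Note that in this sketch the spike itself enters the history window afterwards, which temporarily widens the spread; a more careful version might exclude flagged values.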

Likewise, ML can be applied to data received from in-vehicle devices, ensuring that their failure does not lead to an accident. Devices such as sensor systems (cameras, LiDAR, and radars) need optimal maintenance; otherwise, safe travel cannot be guaranteed.

Adding computer systems and networking capabilities to vehicles increases focus on vehicle cybersecurity. And that’s where machine learning takes center stage. In particular, it can be used to detect attacks and anomalies and then overcome them.

One threat to an individual vehicle is that a hacker can access its system or use its data. ML models help detect such attacks and anomalies in order to keep the vehicle, its passengers, and roads safe.
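
As a hedged illustration of anomaly-based attack detection, the sketch below learns how often each message ID normally appears on an in-vehicle network per time window, then flags IDs that are unknown or far more frequent than usual. The message IDs, the function names `learn_baseline` and `suspicious_ids`, and the ratio threshold are hypothetical, and a frequency baseline like this is a simple stand-in for the ML models mentioned above.

```python
from collections import Counter


def learn_baseline(normal_windows):
    """Average count per window for each message ID seen in known-good traffic."""
    totals = Counter()
    for window in normal_windows:
        totals.update(window)
    n = len(normal_windows)
    return {msg_id: count / n for msg_id, count in totals.items()}


def suspicious_ids(window, baseline, max_ratio=3.0):
    """Flag IDs that never appeared in training or appear far more often than usual."""
    flagged = []
    for msg_id, count in Counter(window).items():
        expected = baseline.get(msg_id)
        if expected is None or count > max_ratio * expected:
            flagged.append(msg_id)
    return sorted(flagged)


# Two windows of normal traffic, then a window with a flood and an unknown ID.
baseline = learn_baseline([[0x100, 0x100, 0x200], [0x100, 0x100, 0x200]])
attack_window = [0x100] * 10 + [0x200, 0x300]
print([hex(i) for i in suspicious_ids(attack_window, baseline)])  # ['0x100', '0x300']
```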

Malicious attacks on an autonomous vehicle’s classification system cannot be ruled out either. Such an adversarial attack could deliberately cause the vehicle to misinterpret an object and misclassify it.

An attack can lead to misclassification by the vehicle, such as when a stop sign is perceived as a speed limit sign. Machine learning can be used to detect such attacks, and vendors are beginning to develop defensive approaches to counter them.

The goal is to provide ADAS with a more versatile way of making decisions. Training that avoids overfitting keeps the system from depending heavily on one key feature or a small set of them. Because the system then draws on a broad base of knowledge, maliciously manipulated input data is less likely to lead to incorrect results or wrong perceptions.

Preventing hacking of the connected networks on which cars operate is of paramount importance. At best, multiple compromised vehicles could stop and cause a traffic jam. But in the worst case, an attack could lead to serious collisions, injury, and death.

More than 25 connected car hacks have been reported since 2015. In the largest software vulnerability incident to date, Chrysler had to recall 1.4 million vehicles. This vulnerability meant that a hacker could take control of the car, including the transmission, steering, and brakes.

There is also a potential market for vehicle-generated data, which can include information about passengers in the car, their location, and their behavior. It’s estimated that the vehicle-generated data market could be worth $750 billion by 2030. While this data is of interest to automotive OEMs and carmakers, such valuable data also attracts hackers.

Therefore, developing systems that better support AV cybersecurity is vital. Each vehicle contains up to 150 Electronic Control Units (ECUs) and takes about 200 million lines of code to run. Such a complex system increases vulnerability to hacking.

In Europe, the United States, and China alone, there will be about 470 million connected vehicles on the roads by 2025, so the wireless interfaces they use must be secure to prevent large-scale hacker attacks. Those who supply computer systems for autonomous vehicles must ensure that their systems are safe and uncompromised.

Concerns about personal data privacy are widespread when it comes to autonomous vehicles. There is data related to the driver, family, or other people who use the car. GPS information makes it possible to track the vehicle or detail its trip history. If a cabin-facing camera is used to monitor the driver, personal information will be collected about each passenger in the vehicle, including where they went, with whom, and when.

Other types of data may be collected from outside the vehicle. This can affect other road users who aren’t aware their data is being collected.

This raises understandable concerns about the legal side of data collection in AVs. Moreover, there is a risk of accidental data leakage or interception, which means that data can be accessed and used in ways that bypass legal protections.

Data is also valuable in terms of competition. While the vehicle is in motion, data is continually collected about what it sees, ADAS’s classification methodology, final findings, etc. If accessed, it can be reconstructed to extract information and copy it to another environment.

There is a significant industry effort to secure real-world machine learning models, in particular those that determine how the system classifies what it perceives, how it determines the speed of movement, and in which direction it will move.

Major tech companies and major car manufacturers are competing to develop proposals for autonomous vehicles. Each of them wants to be the first to enter the market in order to dominate this area. There is a lot of activity right now with the development of connected infrastructure, the emergence of 5G technology, the move towards new legislation to regulate the industry, and even the move towards mobility as a service (MaaS).

There are also changes in how machine learning is used in AVs. These are future trends that we believe will drive the self-driving car market.

Imaging radar is a high-resolution radar that can both detect and classify objects. In addition to the basic capabilities of radar, imaging radar collects a high density of reflected points. Thus, it detects the object, determines its proximity, and uses all the points to create outlines of the objects it picks up. Based on these outlines, the system can start classifying the reflecting object.
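
The step from a dense set of reflected points to object outlines can be sketched as grouping nearby points and drawing a box around each group. The code below is a simplified illustration using 2D points and a plain distance threshold; the function names `cluster_points` and `bounding_box` and the 1-metre gap are assumptions, and real imaging radar pipelines work with richer data such as velocity and elevation.

```python
import math


def cluster_points(points, max_gap=1.0):
    """Group points so that each point is within max_gap of another point in its group."""
    unvisited = set(range(len(points)))
    clusters = []
    while unvisited:
        seed = unvisited.pop()
        cluster, frontier = [seed], [seed]
        while frontier:
            i = frontier.pop()
            near = [j for j in unvisited if math.dist(points[i], points[j]) <= max_gap]
            for j in near:
                unvisited.remove(j)
            cluster.extend(near)
            frontier.extend(near)
        clusters.append([points[k] for k in cluster])
    return clusters


def bounding_box(cluster):
    """Axis-aligned outline of a cluster as (min_x, min_y, max_x, max_y)."""
    xs = [p[0] for p in cluster]
    ys = [p[1] for p in cluster]
    return (min(xs), min(ys), max(xs), max(ys))


# Two groups of reflections, a few metres apart.
reflections = [(0.0, 0.0), (0.5, 0.0), (1.0, 0.2), (10.0, 10.0), (10.4, 10.3)]
outlines = sorted(bounding_box(c) for c in cluster_points(reflections))
```

The size and shape of each outline could then serve as input features for a classifier that decides what kind of object produced the reflections.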

An imaging radar has relatively low development costs. And for a sensor that takes full advantage of the radar in detection and provides classification capabilities, this is an exciting trend for the future.

Another emerging trend in machine learning for the automotive market is the transfer of technologies currently used for camera-based classification and detection to LiDAR data. So instead of using a 2D image frame to define and classify objects, you can use 3D data from LiDAR reflections and then implement neural networks trained on that information. Thus, the system can identify aspects such as the beginning and end of the road and the location of intersections or traffic lights.

This is made possible by using Convolutional Neural Networks (CNNs), a class of deep learning models. Companies are already successfully working on this technology, and this is a very promising area.
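
To give a feel for how image-style convolutions can be applied to LiDAR data, the sketch below turns points into a small top-down occupancy grid and slides one kernel over it. It assumes one common approach (rasterizing points into a 2D grid) and uses a fixed, hand-written kernel, whereas a real CNN learns many kernels from data. The function names `occupancy_grid` and `conv2d_valid` and the grid parameters are hypothetical.

```python
def occupancy_grid(points, size=4, cell_m=1.0):
    """Mark each grid cell that contains at least one LiDAR point (top-down view)."""
    grid = [[0.0] * size for _ in range(size)]
    for x, y in points:
        col, row = int(x // cell_m), int(y // cell_m)
        if 0 <= row < size and 0 <= col < size:
            grid[row][col] = 1.0
    return grid


def conv2d_valid(grid, kernel):
    """Slide the kernel over the grid without padding and sum the elementwise products."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(grid) - kh + 1
    out_w = len(grid[0]) - kw + 1
    return [
        [
            sum(grid[r + i][c + j] * kernel[i][j] for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


# Three points in a 3x3 grid, filtered with a 2x2 kernel that counts occupied cells.
grid = occupancy_grid([(0.5, 0.5), (1.5, 0.5), (0.2, 1.7)], size=3)
feature_map = conv2d_valid(grid, [[1.0, 1.0], [1.0, 1.0]])
print(feature_map)  # [[3.0, 1.0], [1.0, 0.0]]
```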

Fully integrated microcontrollers (MCUs) will power next-generation autonomous vehicles. At Level 5, microcontrollers allow the vehicle to detect a malfunction and then automatically stop the car safely without any intervention from the driver.

Current high-performance processors are derived from graphics, desktop, and enterprise computing. They are not designed specifically for automotive applications and are not sufficient on their own. As a result, they are paired with a separate MCU. The MCU sits next to the processor and gives the system a direct interface for communicating safely with the vehicle.

The MCU can perform a high-performance integrated circuit safety self-test with diagnostics that ensure the health and optimum performance of the system-on-a-chip (SoC). The MCU acts as a core processor to overcome the inherent disadvantages of even high-end processors, as they are not designed for use in an automotive environment.

There is a trend toward integrating the capabilities of the microcontroller into the processor to create a single-chip solution. Manufacturers will build the functionality of the MCU directly into their chips, so the products are specifically designed to meet the higher processing requirements of autonomous vehicles. This eliminates the need for a separate chip, reducing costs and improving processor quality and capabilities.

Self-driving cars and trucks are definitely around the corner. Thanks to machine learning, these vehicles will increase accessibility for people with disabilities, ensure faster and more economical delivery to remote areas, and, above all, help improve road safety by reducing accidents and deaths.

But for these vehicles to change our lives for the better, several factors must come together. Car manufacturers will have to do their part to ensure these vehicles’ safety, reliability, and viability. They certainly want to recoup their investment in research and development, but they will need to prove the safety of self-driving vehicles before consumers readily accept them.

Governments also have a role to play. They will need to pass laws on vehicle autonomy and driverlessness. Undoubtedly, different countries will approach this in different ways. And even within a country, different legislatures – for example, in the United States – may see things differently. Collaboration in this area will go a long way in helping the industry to provide standardized vehicles with similar or identical specifications.

Governments can also help encourage the use of autonomous vehicles. In the same way that many legislatures have encouraged the use of electric vehicles (EV) or those less harmful to the environment, tax breaks can encourage the use of autonomous vehicles. It would be beneficial for countries that do this because there will be fewer accidents and less pressure on healthcare. Likewise, we might see insurance companies offering lower premiums for self-driving cars, possibly on a decreasing scale depending on the level of autonomy.

Finally, collaboration around automotive innovation is key. Modern software development companies have experience with machine learning in their respective fields. Some specialize in camera sensing or LiDAR processing. Others have invested in fusing sensor inputs or have experience building ML models for pathfinding and trajectory planning and converting them into highly personalized steering, acceleration, and deceleration responses.

We at rinf.tech have a robust Automotive Division where we apply machine and deep learning models for custom ADAS and digital cockpit solutions. Some of the world’s leading automotive companies and OEMs have dedicated software development teams with us for full-fledged AV solution development. They also leverage our R&D Embedded Center to experiment with ML model training and neural networks and to complete pilot projects.

Car manufacturers and OEMs will need to establish technology partnerships with AI-specialized custom software developers to validate and future-proof innovative ideas, create pilots and MVPs, and turn them into full-fledged next-gen connected car solutions.